
How to Write a Literature Review | Guide, Examples, & Templates

Published on January 2, 2023 by Shona McCombes. Revised on September 11, 2023.

What is a literature review? A literature review is a survey of scholarly sources on a specific topic. It provides an overview of current knowledge, allowing you to identify relevant theories, methods, and gaps in the existing research that you can later apply to your paper, thesis, or dissertation topic.

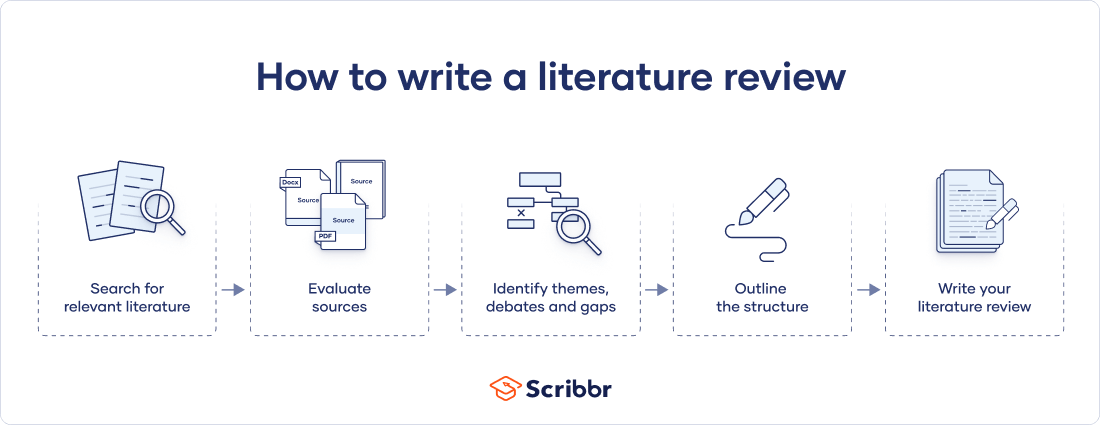

There are five key steps to writing a literature review:

- Search for relevant literature

- Evaluate sources

- Identify themes, debates, and gaps

- Outline the structure

- Write your literature review

A good literature review doesn’t just summarize sources; it analyzes, synthesizes, and critically evaluates them to give a clear picture of the state of knowledge on the subject.


Table of contents

- What is the purpose of a literature review?

- Examples of literature reviews

- Step 1 – Search for relevant literature

- Step 2 – Evaluate and select sources

- Step 3 – Identify themes, debates, and gaps

- Step 4 – Outline your literature review’s structure

- Step 5 – Write your literature review

- Frequently asked questions

What is the purpose of a literature review?

When you write a thesis, dissertation, or research paper, you will likely have to conduct a literature review to situate your research within existing knowledge. The literature review gives you a chance to:

- Demonstrate your familiarity with the topic and its scholarly context

- Develop a theoretical framework and methodology for your research

- Position your work in relation to other researchers and theorists

- Show how your research addresses a gap or contributes to a debate

- Evaluate the current state of research and demonstrate your knowledge of the scholarly debates around your topic.

Writing literature reviews is a particularly important skill if you want to apply for graduate school or pursue a career in research. We’ve written a step-by-step guide that you can follow below.


Examples of literature reviews

Writing literature reviews can be quite challenging! A good starting point could be to look at some examples, depending on what kind of literature review you’d like to write.

- Example literature review #1: “Why Do People Migrate? A Review of the Theoretical Literature” (Theoretical literature review about the development of economic migration theory from the 1950s to today.)

- Example literature review #2: “Literature review as a research methodology: An overview and guidelines” (Methodological literature review about interdisciplinary knowledge acquisition and production.)

- Example literature review #3: “The Use of Technology in English Language Learning: A Literature Review” (Thematic literature review about the effects of technology on language acquisition.)

- Example literature review #4: “Learners’ Listening Comprehension Difficulties in English Language Learning: A Literature Review” (Chronological literature review about how the concept of listening skills has changed over time.)


Step 1 – Search for relevant literature

Before you begin searching for literature, you need a clearly defined topic.

If you are writing the literature review section of a dissertation or research paper, you will search for literature related to your research problem and questions.

Make a list of keywords

Start by creating a list of keywords related to your research question. Include each of the key concepts or variables you’re interested in, and list any synonyms and related terms. You can add to this list as you discover new keywords in the process of your literature search.

- Social media, Facebook, Instagram, Twitter, Snapchat, TikTok

- Body image, self-perception, self-esteem, mental health

- Generation Z, teenagers, adolescents, youth

Search for relevant sources

Use your keywords to begin searching for sources. Some useful databases to search for journals and articles include:

- Your university’s library catalogue

- Google Scholar

- Project Muse (humanities and social sciences)

- Medline (life sciences and biomedicine)

- EconLit (economics)

- Inspec (physics, engineering and computer science)

You can also use boolean operators to help narrow down your search.
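As a rough illustration of how the keyword list and boolean operators fit together, the sketch below joins synonyms with OR inside each concept group and then joins the groups with AND. The function name `build_boolean_query` is hypothetical, and the exact syntax (uppercase operators, parentheses, quotation marks for phrases) is an assumption that holds for many databases but not all, so check the search help of the database you use.

```python
def build_boolean_query(concept_groups):
    """Join synonyms with OR inside each group, then join the groups with AND."""
    clauses = []
    for terms in concept_groups:
        # Quote multi-word terms so they are searched as exact phrases
        # (an assumption: phrase syntax differs between databases).
        quoted = [f'"{term}"' if " " in term else term for term in terms]
        clauses.append("(" + " OR ".join(quoted) + ")")
    return " AND ".join(clauses)


# Keyword groups taken from the example list above, one group per concept.
groups = [
    ["social media", "Instagram", "TikTok"],
    ["body image", "self-esteem"],
    ["adolescents", "teenagers"],
]

query = build_boolean_query(groups)
print(query)
# ("social media" OR Instagram OR TikTok) AND ("body image" OR self-esteem) AND (adolescents OR teenagers)
```

Adding a synonym to a group widens the search, while adding a whole new group narrows it, which makes it easy to adjust the query as you discover new keywords.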

Make sure to read the abstract to find out whether an article is relevant to your question. When you find a useful book or article, you can check the bibliography to find other relevant sources.

Step 2 – Evaluate and select sources

You likely won’t be able to read absolutely everything that has been written on your topic, so it will be necessary to evaluate which sources are most relevant to your research question.

For each publication, ask yourself:

- What question or problem is the author addressing?

- What are the key concepts and how are they defined?

- What are the key theories, models, and methods?

- Does the research use established frameworks or take an innovative approach?

- What are the results and conclusions of the study?

- How does the publication relate to other literature in the field? Does it confirm, add to, or challenge established knowledge?

- What are the strengths and weaknesses of the research?

Make sure the sources you use are credible, and make sure you read any landmark studies and major theories in your field of research.


Take notes and cite your sources

As you read, you should also begin the writing process. Take notes that you can later incorporate into the text of your literature review.

It is important to keep track of your sources with citations to avoid plagiarism. It can be helpful to make an annotated bibliography, where you compile full citation information and write a paragraph of summary and analysis for each source. This helps you remember what you read and saves time later in the process.


Step 3 – Identify themes, debates, and gaps

To begin organizing your literature review’s argument and structure, be sure you understand the connections and relationships between the sources you’ve read. Based on your reading and notes, you can look for:

- Trends and patterns (in theory, method or results): do certain approaches become more or less popular over time?

- Themes: what questions or concepts recur across the literature?

- Debates, conflicts and contradictions: where do sources disagree?

- Pivotal publications: are there any influential theories or studies that changed the direction of the field?

- Gaps: what is missing from the literature? Are there weaknesses that need to be addressed?

This step will help you work out the structure of your literature review and (if applicable) show how your own research will contribute to existing knowledge.

- Most research has focused on young women.

- There is an increasing interest in the visual aspects of social media.

- But there is still a lack of robust research on highly visual platforms like Instagram and Snapchat—this is a gap that you could address in your own research.

Step 4 – Outline your literature review’s structure

There are various approaches to organizing the body of a literature review. Depending on the length of your literature review, you can combine several of these strategies (for example, your overall structure might be thematic, but each theme is discussed chronologically).

Chronological

The simplest approach is to trace the development of the topic over time. However, if you choose this strategy, be careful to avoid simply listing and summarizing sources in order.

Try to analyze patterns, turning points and key debates that have shaped the direction of the field. Give your interpretation of how and why certain developments occurred.

Thematic

If you have found some recurring central themes, you can organize your literature review into subsections that address different aspects of the topic.

For example, if you are reviewing literature about inequalities in migrant health outcomes, key themes might include healthcare policy, language barriers, cultural attitudes, legal status, and economic access.

Methodological

If you draw your sources from different disciplines or fields that use a variety of research methods, you might want to compare the results and conclusions that emerge from different approaches. For example:

- Look at what results have emerged in qualitative versus quantitative research

- Discuss how the topic has been approached by empirical versus theoretical scholarship

- Divide the literature into sociological, historical, and cultural sources

Theoretical

A literature review is often the foundation for a theoretical framework. You can use it to discuss various theories, models, and definitions of key concepts.

You might argue for the relevance of a specific theoretical approach, or combine various theoretical concepts to create a framework for your research.

Step 5 – Write your literature review

Like any other academic text, your literature review should have an introduction, a main body, and a conclusion. What you include in each depends on the objective of your literature review.

The introduction should clearly establish the focus and purpose of the literature review.

Depending on the length of your literature review, you might want to divide the body into subsections. You can use a subheading for each theme, time period, or methodological approach.

As you write, you can follow these tips:

- Summarize and synthesize: give an overview of the main points of each source and combine them into a coherent whole

- Analyze and interpret: don’t just paraphrase other researchers — add your own interpretations where possible, discussing the significance of findings in relation to the literature as a whole

- Critically evaluate: mention the strengths and weaknesses of your sources

- Write in well-structured paragraphs: use transition words and topic sentences to draw connections, comparisons and contrasts

In the conclusion, you should summarize the key findings you have taken from the literature and emphasize their significance.

When you’ve finished writing and revising your literature review, don’t forget to proofread thoroughly before submitting.


Frequently asked questions

A literature review is a survey of scholarly sources (such as books, journal articles, and theses) related to a specific topic or research question.

It is often written as part of a thesis, dissertation, or research paper, in order to situate your work in relation to existing knowledge.

There are several reasons to conduct a literature review at the beginning of a research project:

- To familiarize yourself with the current state of knowledge on your topic

- To ensure that you’re not just repeating what others have already done

- To identify gaps in knowledge and unresolved problems that your research can address

- To develop your theoretical framework and methodology

- To provide an overview of the key findings and debates on the topic

Writing the literature review shows your reader how your work relates to existing research and what new insights it will contribute.

The literature review usually comes near the beginning of your thesis or dissertation. After the introduction, it grounds your research in a scholarly field and leads directly to your theoretical framework or methodology.

A literature review is a survey of credible sources on a topic, often used in dissertations, theses, and research papers. Literature reviews give an overview of knowledge on a subject, helping you identify relevant theories and methods, as well as gaps in existing research. Literature reviews are set up similarly to other academic texts, with an introduction, a main body, and a conclusion.

An annotated bibliography is a list of source references that has a short description (called an annotation) for each of the sources. It is often assigned as part of the research process for a paper.

Cite this Scribbr article


McCombes, S. (2023, September 11). How to Write a Literature Review | Guide, Examples, & Templates. Scribbr. Retrieved April 15, 2024, from https://www.scribbr.com/dissertation/literature-review/


Research Methods


Literature Review

- What is a Literature Review?

- What is NOT a Literature Review?

- Purposes of a Literature Review

- Types of Literature Reviews

- Literature Reviews vs. Systematic Reviews

- Systematic vs. Meta-Analysis

A literature review is a comprehensive survey of the works published in a particular field of study or line of research, usually over a specific period of time, in the form of an in-depth, critical bibliographic essay or annotated list in which attention is drawn to the most significant works.

Also, we can define a literature review as the collected body of scholarly works related to a topic:

- Summarizes and analyzes previous research relevant to a topic

- Includes scholarly books and articles published in academic journals

- Can be a specific scholarly paper or a section in a research paper

The objective of a literature review is to find previously published scholarly works relevant to a specific topic. Doing so can:

- Help gather ideas or information

- Keep you up to date with current trends and findings

- Help develop new questions

A literature review is important because it:

- Explains the background of research on a topic.

- Demonstrates why a topic is significant to a subject area.

- Helps focus your own research questions or problems.

- Discovers relationships between research studies/ideas.

- Suggests unexplored ideas or populations.

- Identifies major themes, concepts, and researchers on a topic.

- Tests assumptions; may help counter preconceived ideas and remove unconscious bias.

- Identifies critical gaps, points of disagreement, or potentially flawed methodology or theoretical approaches.

- Indicates potential directions for future research.

All content in this section is from Literature Review Research from Old Dominion University

Keep in mind that a literature review is NOT:

Not an essay

Not an annotated bibliography in which you summarize each article that you have reviewed. A literature review goes beyond basic summarizing to focus on the critical analysis of the reviewed works and their relationship to your research question.

Not a research paper where you select resources to support one side of an issue versus another. A lit review should explain and consider all sides of an argument in order to avoid bias, and areas of agreement and disagreement should be highlighted.

A literature review serves several purposes. For example, it

- provides thorough knowledge of previous studies; introduces seminal works.

- helps focus one’s own research topic.

- identifies a conceptual framework for one’s own research questions or problems; indicates potential directions for future research.

- suggests previously unused or underused methodologies, designs, quantitative and qualitative strategies.

- identifies gaps in previous studies; identifies flawed methodologies and/or theoretical approaches; avoids replication of mistakes.

- helps the researcher avoid repetition of earlier research.

- suggests unexplored populations.

- determines whether past studies agree or disagree; identifies controversy in the literature.

- tests assumptions; may help counter preconceived ideas and remove unconscious bias.

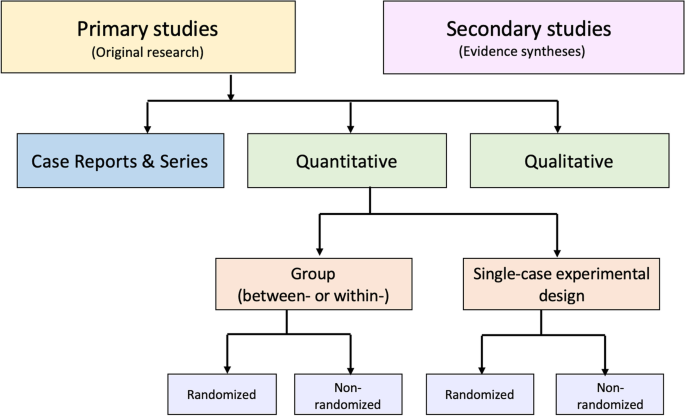

As Kennedy (2007) notes*, it is important to think of knowledge in a given field as consisting of three layers. First, there are the primary studies that researchers conduct and publish. Second are the reviews of those studies that summarize and offer new interpretations built from and often extending beyond the original studies. Third, there are the perceptions, conclusions, opinions, and interpretations that are shared informally and become part of the lore of the field. In composing a literature review, it is important to note that it is often this third layer of knowledge that is cited as "true" even though it often has only a loose relationship to the primary studies and secondary literature reviews.

Given this, while literature reviews are designed to provide an overview and synthesis of pertinent sources you have explored, there are several approaches to how they can be done, depending upon the type of analysis underpinning your study. Listed below are definitions of types of literature reviews:

Argumentative Review

This form examines literature selectively in order to support or refute an argument, deeply embedded assumption, or philosophical problem already established in the literature. The purpose is to develop a body of literature that establishes a contrarian viewpoint. Given the value-laden nature of some social science research [e.g., educational reform; immigration control], argumentative approaches to analyzing the literature can be a legitimate and important form of discourse. However, note that they can also introduce problems of bias when they are used to make summary claims of the sort found in systematic reviews.

Integrative Review

This form is considered a type of research that reviews, critiques, and synthesizes representative literature on a topic in an integrated way such that new frameworks and perspectives on the topic are generated. The body of literature includes all studies that address related or identical hypotheses. A well-done integrative review meets the same standards as primary research in regard to clarity, rigor, and replication.

Historical Review

Few things rest in isolation from historical precedent. Historical reviews are focused on examining research throughout a period of time, often starting with the first time an issue, concept, theory, or phenomenon emerged in the literature, then tracing its evolution within the scholarship of a discipline. The purpose is to place research in a historical context to show familiarity with state-of-the-art developments and to identify the likely directions for future research.

Methodological Review

A review does not always focus on what someone said [content], but how they said it [method of analysis]. This approach provides a framework of understanding at different levels (i.e., those of theory, substantive fields, research approaches, and data collection and analysis techniques). It enables researchers to draw on a wide variety of knowledge, ranging from the conceptual level to practical documents for use in fieldwork, in the areas of ontological and epistemological consideration, quantitative and qualitative integration, sampling, interviewing, data collection, and data analysis. It also helps highlight many ethical issues that researchers should be aware of and consider throughout a study.

Systematic Review

This form consists of an overview of existing evidence pertinent to a clearly formulated research question, which uses pre-specified and standardized methods to identify and critically appraise relevant research, and to collect, report, and analyse data from the studies that are included in the review. Typically it focuses on a very specific empirical question, often posed in a cause-and-effect form, such as "To what extent does A contribute to B?"

Theoretical Review

The purpose of this form is to concretely examine the corpus of theory that has accumulated in regard to an issue, concept, theory, or phenomenon. The theoretical literature review helps establish what theories already exist, the relationships between them, and to what degree the existing theories have been investigated, and it helps develop new hypotheses to be tested. Often this form is used to help establish a lack of appropriate theories or reveal that current theories are inadequate for explaining new or emerging research problems. The unit of analysis can focus on a theoretical concept or a whole theory or framework.

* Kennedy, Mary M. "Defining a Literature." Educational Researcher 36 (April 2007): 139-147.

All content in this section is from The Literature Review, created by Dr. Robert Larabee, USC.

Robinson, P. and Lowe, J. (2015), Literature reviews vs systematic reviews. Australian and New Zealand Journal of Public Health, 39: 103-103. doi: 10.1111/1753-6405.12393

What's in the name? The difference between a Systematic Review and a Literature Review, and why it matters. By Lynn Kysh, University of Southern California.

Systematic review or meta-analysis?

A systematic review answers a defined research question by collecting and summarizing all empirical evidence that fits pre-specified eligibility criteria.

A meta-analysis is the use of statistical methods to summarize the results of these studies.

Systematic reviews, just like other research articles, can be of varying quality. They are a significant piece of work (the Centre for Reviews and Dissemination at York estimates that a team will take 9-24 months), and to be useful to other researchers and practitioners they should have:

- clearly stated objectives with pre-defined eligibility criteria for studies

- explicit, reproducible methodology

- a systematic search that attempts to identify all studies

- assessment of the validity of the findings of the included studies (e.g. risk of bias)

- systematic presentation, and synthesis, of the characteristics and findings of the included studies
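To make "pre-defined eligibility criteria" and "explicit, reproducible methodology" concrete, here is a minimal sketch of screening candidate studies against criteria that are fixed before the search begins. Everything in it is hypothetical: the `Study` fields, the year cutoff, the allowed designs, and the three example records are invented for illustration and are not drawn from any real review protocol.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Study:
    title: str
    year: int
    design: str
    peer_reviewed: bool


# Hypothetical eligibility criteria, written down before screening starts.
MIN_YEAR = 2010
ALLOWED_DESIGNS = {"randomised controlled trial", "cohort study"}


def screen(study):
    """Return (included, reasons_for_exclusion) for a single study."""
    reasons = []
    if study.year < MIN_YEAR:
        reasons.append(f"published before {MIN_YEAR}")
    if study.design not in ALLOWED_DESIGNS:
        reasons.append(f"design not eligible: {study.design}")
    if not study.peer_reviewed:
        reasons.append("not peer reviewed")
    return (not reasons, reasons)


# Invented records standing in for search results.
candidates = [
    Study("Hypothetical trial A", 2018, "randomised controlled trial", True),
    Study("Hypothetical survey B", 2015, "cross-sectional survey", True),
    Study("Hypothetical cohort C", 2004, "cohort study", False),
]

decisions = {study.title: screen(study) for study in candidates}
for title, (included, reasons) in decisions.items():
    print(title, "INCLUDE" if included else "EXCLUDE: " + "; ".join(reasons))
```

Recording the reason for each exclusion, rather than only the decision, is what lets another team rerun the screening and arrive at the same set of included studies.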

Not all systematic reviews contain meta-analysis.

Meta-analysis is the use of statistical methods to summarize the results of independent studies. By combining information from all relevant studies, meta-analysis can provide more precise estimates of the effects of health care than those derived from the individual studies included within a review. More information on meta-analyses can be found in Cochrane Handbook, Chapter 9.

A meta-analysis goes beyond critique and integration and conducts secondary statistical analysis on the outcomes of similar studies. It is a systematic review that uses quantitative methods to synthesize and summarize the results.
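The text does not name a specific statistical method, so the following is only a sketch of one common choice, fixed-effect inverse-variance pooling, in which each study is weighted by the inverse of its squared standard error. The function name `pool_fixed_effect` and the three effect estimates are hypothetical and chosen purely for illustration; a real meta-analysis would also assess heterogeneity and might call for a random-effects model instead.

```python
import math


def pool_fixed_effect(effects, std_errors):
    """Pool study effects with inverse-variance weights (fixed-effect model)."""
    # More precise studies (smaller standard error) receive larger weights.
    weights = [1.0 / se ** 2 for se in std_errors]
    total_weight = sum(weights)
    pooled = sum(w * e for w, e in zip(weights, effects)) / total_weight
    pooled_se = math.sqrt(1.0 / total_weight)
    return pooled, pooled_se


# Invented effect estimates and standard errors for three studies.
effects = [0.30, 0.10, 0.25]
std_errors = [0.10, 0.20, 0.10]

pooled, pooled_se = pool_fixed_effect(effects, std_errors)
# Approximate 95% confidence interval using the normal critical value 1.96.
ci_low = pooled - 1.96 * pooled_se
ci_high = pooled + 1.96 * pooled_se
print(f"pooled effect = {pooled:.3f}, SE = {pooled_se:.3f}")
print(f"95% CI = ({ci_low:.3f}, {ci_high:.3f})")
```

The pooled standard error is smaller than that of any single study in the example, which is the sense in which combining studies can give a more precise estimate than the individual studies do.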

An advantage of a meta-analysis is the ability to be completely objective in evaluating research findings. Not all topics, however, have sufficient research evidence to allow a meta-analysis to be conducted. In that case, an integrative review is an appropriate strategy.

Some of the content in this section is from Systematic reviews and meta-analyses: step by step guide created by Kate McAllister.


- Last Updated: Aug 21, 2023 4:07 PM

- URL: https://guides.lib.udel.edu/researchmethods

Encyclopedia of Evidence in Pharmaceutical Public Health and Health Services Research in Pharmacy pp 1–15 Cite as

Methodological Approaches to Literature Review

Dennis Thomas, Elida Zairina & Johnson George

- Living reference work entry

- First Online: 09 May 2023


The literature review can serve various functions in the contexts of education and research. It aids in identifying knowledge gaps, informing research methodology, and developing a theoretical framework during the planning stages of a research study or project, as well as reporting of review findings in the context of the existing literature. This chapter discusses the methodological approaches to conducting a literature review and offers an overview of different types of reviews. There are various types of reviews, including narrative reviews, scoping reviews, and systematic reviews with reporting strategies such as meta-analysis and meta-synthesis. Review authors should consider the scope of the literature review when selecting a type and method. Being focused is essential for a successful review; however, this must be balanced against the relevance of the review to a broad audience.

This is a preview of subscription content, log in via an institution .

Akobeng AK. Principles of evidence based medicine. Arch Dis Child. 2005;90(8):837–40.

Article CAS PubMed PubMed Central Google Scholar

Alharbi A, Stevenson M. Refining Boolean queries to identify relevant studies for systematic review updates. J Am Med Inform Assoc. 2020;27(11):1658–66.

Article PubMed PubMed Central Google Scholar

Arksey H, O’Malley L. Scoping studies: towards a methodological framework. Int J Soc Res Methodol. 2005;8(1):19–32.

Article Google Scholar

Aromataris E MZE. JBI manual for evidence synthesis. 2020.

Google Scholar

Aromataris E, Pearson A. The systematic review: an overview. Am J Nurs. 2014;114(3):53–8.

Article PubMed Google Scholar

Aromataris E, Riitano D. Constructing a search strategy and searching for evidence. A guide to the literature search for a systematic review. Am J Nurs. 2014;114(5):49–56.

Babineau J. Product review: covidence (systematic review software). J Canad Health Libr Assoc Canada. 2014;35(2):68–71.

Baker JD. The purpose, process, and methods of writing a literature review. AORN J. 2016;103(3):265–9.

Bastian H, Glasziou P, Chalmers I. Seventy-five trials and eleven systematic reviews a day: how will we ever keep up? PLoS Med. 2010;7(9):e1000326.

Bramer WM, Rethlefsen ML, Kleijnen J, Franco OH. Optimal database combinations for literature searches in systematic reviews: a prospective exploratory study. Syst Rev. 2017;6(1):1–12.

Brown D. A review of the PubMed PICO tool: using evidence-based practice in health education. Health Promot Pract. 2020;21(4):496–8.

Cargo M, Harris J, Pantoja T, et al. Cochrane qualitative and implementation methods group guidance series – paper 4: methods for assessing evidence on intervention implementation. J Clin Epidemiol. 2018;97:59–69.

Cook DJ, Mulrow CD, Haynes RB. Systematic reviews: synthesis of best evidence for clinical decisions. Ann Intern Med. 1997;126(5):376–80.

Article CAS PubMed Google Scholar

Counsell C. Formulating questions and locating primary studies for inclusion in systematic reviews. Ann Intern Med. 1997;127(5):380–7.

Cummings SR, Browner WS, Hulley SB. Conceiving the research question and developing the study plan. In: Cummings SR, Browner WS, Hulley SB, editors. Designing Clinical Research: An Epidemiological Approach. 4th ed. Philadelphia (PA): P Lippincott Williams & Wilkins; 2007. p. 14–22.

Eriksen MB, Frandsen TF. The impact of patient, intervention, comparison, outcome (PICO) as a search strategy tool on literature search quality: a systematic review. JMLA. 2018;106(4):420.

Ferrari R. Writing narrative style literature reviews. Medical Writing. 2015;24(4):230–5.

Flemming K, Booth A, Hannes K, Cargo M, Noyes J. Cochrane qualitative and implementation methods group guidance series – paper 6: reporting guidelines for qualitative, implementation, and process evaluation evidence syntheses. J Clin Epidemiol. 2018;97:79–85.

Grant MJ, Booth A. A typology of reviews: an analysis of 14 review types and associated methodologies. Health Inf Libr J. 2009;26(2):91–108.

Green BN, Johnson CD, Adams A. Writing narrative literature reviews for peer-reviewed journals: secrets of the trade. J Chiropr Med. 2006;5(3):101–17.

Gregory AT, Denniss AR. An introduction to writing narrative and systematic reviews; tasks, tips and traps for aspiring authors. Heart Lung Circ. 2018;27(7):893–8.

Harden A, Thomas J, Cargo M, et al. Cochrane qualitative and implementation methods group guidance series – paper 5: methods for integrating qualitative and implementation evidence within intervention effectiveness reviews. J Clin Epidemiol. 2018;97:70–8.

Harris JL, Booth A, Cargo M, et al. Cochrane qualitative and implementation methods group guidance series – paper 2: methods for question formulation, searching, and protocol development for qualitative evidence synthesis. J Clin Epidemiol. 2018;97:39–48.

Higgins J, Thomas J. In: Chandler J, Cumpston M, Li T, Page MJ, Welch VA, editors. Cochrane Handbook for Systematic Reviews of Interventions version 6.3, updated February 2022). Available from www.training.cochrane.org/handbook.: Cochrane; 2022.

International prospective register of systematic reviews (PROSPERO). Available from https://www.crd.york.ac.uk/prospero/ .

Khan KS, Kunz R, Kleijnen J, Antes G. Five steps to conducting a systematic review. J R Soc Med. 2003;96(3):118–21.

Landhuis E. Scientific literature: information overload. Nature. 2016;535(7612):457–8.

Lockwood C, Porritt K, Munn Z, Rittenmeyer L, Salmond S, Bjerrum M, Loveday H, Carrier J, Stannard D. Chapter 2: Systematic reviews of qualitative evidence. In: Aromataris E, Munn Z, editors. JBI Manual for Evidence Synthesis. JBI; 2020. Available from https://synthesismanual.jbi.global . https://doi.org/10.46658/JBIMES-20-03 .

Chapter Google Scholar

Lorenzetti DL, Topfer L-A, Dennett L, Clement F. Value of databases other than medline for rapid health technology assessments. Int J Technol Assess Health Care. 2014;30(2):173–8.

Moher D, Liberati A, Tetzlaff J, Altman DG, the PRISMA Group. Preferred reporting items for (SR) and meta-analyses: the PRISMA statement. Ann Intern Med. 2009;6:264–9.

Mulrow CD. Systematic reviews: rationale for systematic reviews. BMJ. 1994;309(6954):597–9.

Munn Z, Peters MDJ, Stern C, Tufanaru C, McArthur A, Aromataris E. Systematic review or scoping review? Guidance for authors when choosing between a systematic or scoping review approach. BMC Med Res Methodol. 2018;18(1):143.

Munthe-Kaas HM, Glenton C, Booth A, Noyes J, Lewin S. Systematic mapping of existing tools to appraise methodological strengths and limitations of qualitative research: first stage in the development of the CAMELOT tool. BMC Med Res Methodol. 2019;19(1):1–13.

Murphy CM. Writing an effective review article. J Med Toxicol. 2012;8(2):89–90.

NHMRC. Guidelines for guidelines: assessing risk of bias. Available at https://nhmrc.gov.au/guidelinesforguidelines/develop/assessing-risk-bias . Last published 29 August 2019. Accessed 29 Aug 2022.

Noyes J, Booth A, Cargo M, et al. Cochrane qualitative and implementation methods group guidance series – paper 1: introduction. J Clin Epidemiol. 2018b;97:35–8.

Noyes J, Booth A, Flemming K, et al. Cochrane qualitative and implementation methods group guidance series – paper 3: methods for assessing methodological limitations, data extraction and synthesis, and confidence in synthesized qualitative findings. J Clin Epidemiol. 2018a;97:49–58.

Noyes J, Booth A, Moore G, Flemming K, Tunçalp Ö, Shakibazadeh E. Synthesising quantitative and qualitative evidence to inform guidelines on complex interventions: clarifying the purposes, designs and outlining some methods. BMJ Glob Health. 2019;4(Suppl 1):e000893.

Peters MD, Godfrey CM, Khalil H, McInerney P, Parker D, Soares CB. Guidance for conducting systematic scoping reviews. Int J Evid Healthcare. 2015;13(3):141–6.

Polanin JR, Pigott TD, Espelage DL, Grotpeter JK. Best practice guidelines for abstract screening large-evidence systematic reviews and meta-analyses. Res Synth Methods. 2019;10(3):330–42.

Shea BJ, Grimshaw JM, Wells GA, et al. Development of AMSTAR: a measurement tool to assess the methodological quality of systematic reviews. BMC Med Res Methodol. 2007;7(1):1–7.

Shea BJ, Reeves BC, Wells G, et al. AMSTAR 2: a critical appraisal tool for systematic reviews that include randomised or non-randomised studies of healthcare interventions, or both. Brit Med J. 2017;358

Sterne JA, Hernán MA, Reeves BC, et al. ROBINS-I: a tool for assessing risk of bias in non-randomised studies of interventions. Br Med J. 2016;355

Stroup DF, Berlin JA, Morton SC, et al. Meta-analysis of observational studies in epidemiology: a proposal for reporting. JAMA. 2000;283(15):2008–12.

Tawfik GM, Dila KAS, Mohamed MYF, et al. A step by step guide for conducting a systematic review and meta-analysis with simulation data. Trop Med Health. 2019;47(1):1–9.

The Critical Appraisal Program. Critical appraisal skills program. Available at https://casp-uk.net/ . 2022. Accessed 29 Aug 2022.

The University of Melbourne. Writing a literature review in Research Techniques 2022. Available at https://students.unimelb.edu.au/academic-skills/explore-our-resources/research-techniques/reviewing-the-literature . Accessed 29 Aug 2022.

The Writing Center, University of Wisconsin-Madison. Learn how to write a literature review in The Writer’s Handbook – Academic Professional Writing. 2022. Available at https://writing.wisc.edu/handbook/assignments/reviewofliterature/ . Accessed 29 Aug 2022.

Thompson SG, Sharp SJ. Explaining heterogeneity in meta-analysis: a comparison of methods. Stat Med. 1999;18(20):2693–708.

Tricco AC, Lillie E, Zarin W, et al. A scoping review on the conduct and reporting of scoping reviews. BMC Med Res Methodol. 2016;16(1):15.

Tricco AC, Lillie E, Zarin W, et al. PRISMA extension for scoping reviews (PRISMA-ScR): checklist and explanation. Ann Intern Med. 2018;169(7):467–73.

Yoneoka D, Henmi M. Clinical heterogeneity in random-effect meta-analysis: between-study boundary estimate problem. Stat Med. 2019;38(21):4131–45.

Yuan Y, Hunt RH. Systematic reviews: the good, the bad, and the ugly. Am J Gastroenterol. 2009;104(5):1086–92.

Author information

Authors and affiliations.

Centre of Excellence in Treatable Traits, College of Health, Medicine and Wellbeing, University of Newcastle, Hunter Medical Research Institute Asthma and Breathing Programme, Newcastle, NSW, Australia

Dennis Thomas

Department of Pharmacy Practice, Faculty of Pharmacy, Universitas Airlangga, Surabaya, Indonesia

Elida Zairina

Centre for Medicine Use and Safety, Monash Institute of Pharmaceutical Sciences, Faculty of Pharmacy and Pharmaceutical Sciences, Monash University, Parkville, VIC, Australia

Johnson George

Corresponding author

Correspondence to Johnson George .

Section Editor information

College of Pharmacy, Qatar University, Doha, Qatar

Derek Charles Stewart

Department of Pharmacy, University of Huddersfield, Huddersfield, United Kingdom

Zaheer-Ud-Din Babar

Copyright information

© 2023 Springer Nature Switzerland AG

About this entry

Cite this entry.

Thomas, D., Zairina, E., George, J. (2023). Methodological Approaches to Literature Review. In: Encyclopedia of Evidence in Pharmaceutical Public Health and Health Services Research in Pharmacy. Springer, Cham. https://doi.org/10.1007/978-3-030-50247-8_57-1

DOI : https://doi.org/10.1007/978-3-030-50247-8_57-1

Received : 22 February 2023

Accepted : 22 February 2023

Published : 09 May 2023

Publisher Name : Springer, Cham

Print ISBN : 978-3-030-50247-8

Online ISBN : 978-3-030-50247-8

eBook Packages : Springer Reference Biomedicine and Life Sciences Reference Module Biomedical and Life Sciences

- USC Libraries

- Research Guides

Organizing Your Social Sciences Research Paper

- 5. The Literature Review

A literature review surveys prior research published in books, scholarly articles, and any other sources relevant to a particular issue, area of research, or theory, and by so doing, provides a description, summary, and critical evaluation of these works in relation to the research problem being investigated. Literature reviews are designed to provide an overview of sources you have used in researching a particular topic and to demonstrate to your readers how your research fits within existing scholarship about the topic.

Fink, Arlene. Conducting Research Literature Reviews: From the Internet to Paper . Fourth edition. Thousand Oaks, CA: SAGE, 2014.

Importance of a Good Literature Review

A literature review may consist of simply a summary of key sources, but in the social sciences, a literature review usually has an organizational pattern and combines both summary and synthesis, often within specific conceptual categories . A summary is a recap of the important information of the source, but a synthesis is a re-organization, or a reshuffling, of that information in a way that informs how you are planning to investigate a research problem. The analytical features of a literature review might:

- Give a new interpretation of old material or combine new with old interpretations,

- Trace the intellectual progression of the field, including major debates,

- Depending on the situation, evaluate the sources and advise the reader on the most pertinent or relevant research, or

- Usually in the conclusion of a literature review, identify where gaps exist in how a problem has been researched to date.

Given this, the purpose of a literature review is to:

- Place each work in the context of its contribution to understanding the research problem being studied.

- Describe the relationship of each work to the others under consideration.

- Identify new ways to interpret prior research.

- Reveal any gaps that exist in the literature.

- Resolve conflicts amongst seemingly contradictory previous studies.

- Identify areas of prior scholarship to prevent duplication of effort.

- Point the way in fulfilling a need for additional research.

- Locate your own research within the context of existing literature [very important].

Fink, Arlene. Conducting Research Literature Reviews: From the Internet to Paper. 2nd ed. Thousand Oaks, CA: Sage, 2005; Hart, Chris. Doing a Literature Review: Releasing the Social Science Research Imagination . Thousand Oaks, CA: Sage Publications, 1998; Jesson, Jill. Doing Your Literature Review: Traditional and Systematic Techniques . Los Angeles, CA: SAGE, 2011; Knopf, Jeffrey W. "Doing a Literature Review." PS: Political Science and Politics 39 (January 2006): 127-132; Ridley, Diana. The Literature Review: A Step-by-Step Guide for Students . 2nd ed. Los Angeles, CA: SAGE, 2012.

Types of Literature Reviews

It is important to think of knowledge in a given field as consisting of three layers. First, there are the primary studies that researchers conduct and publish. Second are the reviews of those studies that summarize and offer new interpretations built from and often extending beyond the primary studies. Third, there are the perceptions, conclusions, opinions, and interpretations that are shared informally among scholars and that become part of the body of epistemological traditions within the field.

In composing a literature review, it is important to note that it is often this third layer of knowledge that is cited as "true" even though it often has only a loose relationship to the primary studies and secondary literature reviews. Given this, while literature reviews are designed to provide an overview and synthesis of pertinent sources you have explored, there are a number of approaches you could adopt depending upon the type of analysis underpinning your study.

Argumentative Review This form examines literature selectively in order to support or refute an argument, deeply embedded assumption, or philosophical problem already established in the literature. The purpose is to develop a body of literature that establishes a contrarian viewpoint. Given the value-laden nature of some social science research [e.g., educational reform; immigration control], argumentative approaches to analyzing the literature can be a legitimate and important form of discourse. However, note that they can also introduce problems of bias when they are used to make summary claims of the sort found in systematic reviews [see below].

Integrative Review Considered a form of research that reviews, critiques, and synthesizes representative literature on a topic in an integrated way such that new frameworks and perspectives on the topic are generated. The body of literature includes all studies that address related or identical hypotheses or research problems. A well-done integrative review meets the same standards as primary research in regard to clarity, rigor, and replication. This is the most common form of review in the social sciences.

Historical Review Few things rest in isolation from historical precedent. Historical literature reviews focus on examining research throughout a period of time, often starting with the first time an issue, concept, theory, or phenomenon emerged in the literature, then tracing its evolution within the scholarship of a discipline. The purpose is to place research in a historical context to show familiarity with state-of-the-art developments and to identify the likely directions for future research.

Methodological Review A review does not always focus on what someone said [findings], but on how they came to say it [method of analysis]. Reviewing methods of analysis provides a framework of understanding at different levels [i.e., those of theory, substantive fields, research approaches, and data collection and analysis techniques]. It also shows how researchers draw upon a wide variety of knowledge, ranging from the conceptual level to practical documents for use in fieldwork, in the areas of ontological and epistemological consideration, quantitative and qualitative integration, sampling, interviewing, data collection, and data analysis. This approach helps highlight ethical issues that you should be aware of and consider as you go through your own study.

Systematic Review This form consists of an overview of existing evidence pertinent to a clearly formulated research question, which uses pre-specified and standardized methods to identify and critically appraise relevant research, and to collect, report, and analyze data from the studies that are included in the review. The goal is to deliberately document, critically evaluate, and summarize scientifically all of the research about a clearly defined research problem . Typically it focuses on a very specific empirical question, often posed in a cause-and-effect form, such as "To what extent does A contribute to B?" This type of literature review is primarily applied to examining prior research studies in clinical medicine and allied health fields, but it is increasingly being used in the social sciences.

Theoretical Review The purpose of this form is to examine the corpus of theory that has accumulated in regard to an issue, concept, theory, or phenomenon. The theoretical literature review helps to establish what theories already exist, the relationships between them, and to what degree the existing theories have been investigated, and it helps to develop new hypotheses to be tested. Often this form is used to help establish a lack of appropriate theories or reveal that current theories are inadequate for explaining new or emerging research problems. The unit of analysis can focus on a theoretical concept or a whole theory or framework.

NOTE : Most often the literature review will incorporate some combination of types. For example, a review that examines literature supporting or refuting an argument, assumption, or philosophical problem related to the research problem will also need to include writing supported by sources that establish the history of these arguments in the literature.

Baumeister, Roy F. and Mark R. Leary. "Writing Narrative Literature Reviews." Review of General Psychology 1 (September 1997): 311-320; Fink, Arlene. Conducting Research Literature Reviews: From the Internet to Paper . 2nd ed. Thousand Oaks, CA: Sage, 2005; Hart, Chris. Doing a Literature Review: Releasing the Social Science Research Imagination . Thousand Oaks, CA: Sage Publications, 1998; Kennedy, Mary M. "Defining a Literature." Educational Researcher 36 (April 2007): 139-147; Petticrew, Mark and Helen Roberts. Systematic Reviews in the Social Sciences: A Practical Guide . Malden, MA: Blackwell Publishers, 2006; Torraco, Richard. "Writing Integrative Literature Reviews: Guidelines and Examples." Human Resource Development Review 4 (September 2005): 356-367; Rocco, Tonette S. and Maria S. Plakhotnik. "Literature Reviews, Conceptual Frameworks, and Theoretical Frameworks: Terms, Functions, and Distinctions." Human Resource Development Review 8 (March 2008): 120-130; Sutton, Anthea. Systematic Approaches to a Successful Literature Review . Los Angeles, CA: Sage Publications, 2016.

Structure and Writing Style

I. Thinking About Your Literature Review

The structure of a literature review should include the following in support of understanding the research problem :

- An overview of the subject, issue, or theory under consideration, along with the objectives of the literature review,

- Division of works under review into themes or categories [e.g. works that support a particular position, those against, and those offering alternative approaches entirely],

- An explanation of how each work is similar to and how it varies from the others,

- Conclusions as to which pieces are best considered in their argument, are most convincing of their opinions, and make the greatest contribution to the understanding and development of their area of research.

The critical evaluation of each work should consider :

- Provenance -- what are the author's credentials? Are the author's arguments supported by evidence [e.g. primary historical material, case studies, narratives, statistics, recent scientific findings]?

- Methodology -- were the techniques used to identify, gather, and analyze the data appropriate to addressing the research problem? Was the sample size appropriate? Were the results effectively interpreted and reported?

- Objectivity -- is the author's perspective even-handed or prejudicial? Is contrary data considered or is certain pertinent information ignored to prove the author's point?

- Persuasiveness -- which of the author's theses are most convincing or least convincing?

- Validity -- are the author's arguments and conclusions convincing? Does the work ultimately contribute in any significant way to an understanding of the subject?

II. Development of the Literature Review

Four Basic Stages of Writing

1. Problem formulation -- which topic or field is being examined and what are its component issues?

2. Literature search -- finding materials relevant to the subject being explored.

3. Data evaluation -- determining which literature makes a significant contribution to the understanding of the topic.

4. Analysis and interpretation -- discussing the findings and conclusions of pertinent literature.

Consider the following issues before writing the literature review:

Clarify If your assignment is not specific about what form your literature review should take, seek clarification from your professor by asking these questions:

1. Roughly how many sources would be appropriate to include?

2. What types of sources should I review (books, journal articles, websites; scholarly versus popular sources)?

3. Should I summarize, synthesize, or critique sources by discussing a common theme or issue?

4. Should I evaluate the sources in any way beyond evaluating how they relate to understanding the research problem?

5. Should I provide subheadings and other background information, such as definitions and/or a history?

Find Models Use the exercise of reviewing the literature to examine how authors in your discipline or area of interest have composed their literature review sections. Read them to get a sense of the types of themes you might want to look for in your own research or to identify ways to organize your final review. The bibliography or reference section of sources you've already read, such as required readings in the course syllabus, is also an excellent entry point into your own research.

Narrow the Topic The narrower your topic, the easier it will be to limit the number of sources you need to read in order to obtain a good survey of relevant resources. Your professor will probably not expect you to read everything that's available about the topic, but you'll make the act of reviewing easier if you first limit the scope of the research problem. A good strategy is to begin by searching the USC Libraries Catalog for recent books about the topic and review the table of contents for chapters that focus on specific issues. You can also review the indexes of books to find references to specific issues that can serve as the focus of your research. For example, a book surveying the history of the Israeli-Palestinian conflict may include a chapter on the role Egypt has played in mediating the conflict, or you can look in the index for the pages where Egypt is mentioned in the text.

Consider Whether Your Sources are Current Some disciplines require that you use information that is as current as possible. This is particularly true in disciplines in medicine and the sciences where research conducted becomes obsolete very quickly as new discoveries are made. However, when writing a review in the social sciences, a survey of the history of the literature may be required. In other words, a complete understanding of the research problem requires you to deliberately examine how knowledge and perspectives have changed over time. Sort through other current bibliographies or literature reviews in the field to get a sense of what your discipline expects. You can also use this method to explore what is considered by scholars to be a "hot topic" and what is not.

III. Ways to Organize Your Literature Review

Chronology of Events If your review follows the chronological method, you could write about the materials according to when they were published. This approach should only be followed if a clear path of research building on previous research can be identified and these trends follow a clear chronological order of development. An example would be a literature review that focuses on continuing research about the emergence of German economic power after the fall of the Soviet Union.

By Publication Order your sources by publication chronology, then, only if the order demonstrates a more important trend. For instance, you could order a review of literature on environmental studies of brown fields if the progression revealed, for example, a change in the soil collection practices of the researchers who wrote and/or conducted the studies.

Thematic [“conceptual categories”] A thematic literature review is the most common approach to summarizing prior research in the social and behavioral sciences. Thematic reviews are organized around a topic or issue, rather than the progression of time, although the progression of time may still be incorporated into a thematic review. For example, a review of the Internet’s impact on American presidential politics could focus on the development of online political satire. While the study focuses on one topic, the Internet’s impact on American presidential politics, it would still be organized chronologically, reflecting technological developments in media. The difference in this example between a "chronological" and a "thematic" approach is what is emphasized the most: themes related to the role of the Internet in presidential politics. Note that more authentic thematic reviews tend to break away from chronological order. A review organized in this manner would shift between time periods within each section according to the point being made.

Methodological A methodological approach focuses on the methods utilized by the researcher. For the Internet in American presidential politics project, one methodological approach would be to look at cultural differences between the portrayal of American presidents on American, British, and French websites. Or the review might focus on the fundraising impact of the Internet on a particular political party. A methodological scope will influence either the types of documents in the review or the way in which these documents are discussed.

Other Sections of Your Literature Review Once you've decided on the organizational method for your literature review, the sections you need to include in the paper should be easy to figure out because they arise from your organizational strategy. In other words, a chronological review would have subsections for each vital time period; a thematic review would have subtopics based upon factors that relate to the theme or issue. However, sometimes you may need to add additional sections that are necessary for your study, but do not fit in the organizational strategy of the body. What other sections you include in the body is up to you. However, only include what is necessary for the reader to locate your study within the larger scholarship about the research problem.

Here are examples of other sections, usually in the form of a single paragraph, you may need to include depending on the type of review you write:

- Current Situation : Information necessary to understand the current topic or focus of the literature review.

- Sources Used : Describes the methods and resources [e.g., databases] you used to identify the literature you reviewed.

- History : The chronological progression of the field, the research literature, or an idea that is necessary to understand the literature review, if the body of the literature review is not already a chronology.

- Selection Methods : Criteria you used to select (and perhaps exclude) sources in your literature review. For instance, you might explain that your review includes only peer-reviewed [i.e., scholarly] sources.

- Standards : Description of the way in which you present your information.

- Questions for Further Research : What questions about the field has the review sparked? How will you further your research as a result of the review?

IV. Writing Your Literature Review

Once you've settled on how to organize your literature review, you're ready to write each section. When writing your review, keep in mind these issues.

Use Evidence A literature review section is, in this sense, just like any other academic research paper. Your interpretation of the available sources must be backed up with evidence [citations] that demonstrates that what you are saying is valid.

Be Selective Select only the most important points in each source to highlight in the review. The type of information you choose to mention should relate directly to the research problem, whether it is thematic, methodological, or chronological. Related items that provide additional information, but that are not key to understanding the research problem, can be included in a list of further readings .

Use Quotes Sparingly Some short quotes are appropriate if you want to emphasize a point, or if what an author stated cannot be easily paraphrased. Sometimes you may need to quote certain terminology that was coined by the author, is not common knowledge, or taken directly from the study. Do not use extensive quotes as a substitute for using your own words in reviewing the literature.

Summarize and Synthesize Remember to summarize and synthesize your sources within each thematic paragraph as well as throughout the review. Recapitulate important features of a research study, but then synthesize it by rephrasing the study's significance and relating it to your own work and the work of others.

Keep Your Own Voice While the literature review presents others' ideas, your voice [the writer's] should remain front and center. For example, weave references to other sources into what you are writing but maintain your own voice by starting and ending the paragraph with your own ideas and wording.

Use Caution When Paraphrasing When paraphrasing a source that is not your own, be sure to represent the author's information or opinions accurately and in your own words. Even when paraphrasing an author’s work, you still must provide a citation to that work.

V. Common Mistakes to Avoid

These are the most common mistakes made in reviewing social science research literature.

- Includes sources that do not clearly relate to the research problem;

- Does not take sufficient time to define and identify the most relevant sources to use in the literature review related to the research problem;

- Relies exclusively on secondary analytical sources rather than including relevant primary research studies or data;

- Uncritically accepts another researcher's findings and interpretations as valid, rather than examining critically all aspects of the research design and analysis;

- Does not describe the search procedures that were used in identifying the literature to review;

- Reports isolated statistical results rather than synthesizing them in chi-squared or meta-analytic methods; and,

- Only includes research that validates assumptions and does not consider contrary findings and alternative interpretations found in the literature.
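To illustrate what synthesizing statistical results, rather than reporting them in isolation, can look like, here is a minimal Python sketch of one common meta-analytic technique: a fixed-effect, inverse-variance weighted average of effect sizes. The function name and the example numbers are invented for illustration and do not come from any study cited in this guide; a real synthesis would also need to assess heterogeneity and study quality.

```python
import math

def pool_fixed_effect(effects, std_errors):
    """Return the inverse-variance weighted mean effect and its standard error."""
    if not effects or len(effects) != len(std_errors):
        raise ValueError("effects and std_errors must be non-empty and the same length")
    if any(se <= 0 for se in std_errors):
        raise ValueError("standard errors must be positive")
    # Each study is weighted by the inverse of its variance (1 / SE^2),
    # so more precise studies contribute more to the pooled estimate.
    weights = [1.0 / (se ** 2) for se in std_errors]
    total_weight = sum(weights)
    pooled = sum(w * e for w, e in zip(weights, effects)) / total_weight
    pooled_se = math.sqrt(1.0 / total_weight)
    return pooled, pooled_se

# Hypothetical effect sizes and standard errors from three studies (invented numbers).
effects = [0.30, 0.10, 0.25]
std_errors = [0.10, 0.20, 0.15]
pooled, pooled_se = pool_fixed_effect(effects, std_errors)
low, high = pooled - 1.96 * pooled_se, pooled + 1.96 * pooled_se
print(f"pooled effect = {pooled:.3f}, 95% CI = [{low:.3f}, {high:.3f}]")
```

The fixed-effect model assumes all studies estimate the same underlying effect; when that assumption is doubtful, a random-effects model is usually the more defensible choice.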

Cook, Kathleen E. and Elise Murowchick. “Do Literature Review Skills Transfer from One Course to Another?” Psychology Learning and Teaching 13 (March 2014): 3-11; Fink, Arlene. Conducting Research Literature Reviews: From the Internet to Paper . 2nd ed. Thousand Oaks, CA: Sage, 2005; Hart, Chris. Doing a Literature Review: Releasing the Social Science Research Imagination . Thousand Oaks, CA: Sage Publications, 1998; Jesson, Jill. Doing Your Literature Review: Traditional and Systematic Techniques . London: SAGE, 2011; Literature Review Handout. Online Writing Center. Liberty University; Literature Reviews. The Writing Center. University of North Carolina; Onwuegbuzie, Anthony J. and Rebecca Frels. Seven Steps to a Comprehensive Literature Review: A Multimodal and Cultural Approach . Los Angeles, CA: SAGE, 2016; Ridley, Diana. The Literature Review: A Step-by-Step Guide for Students . 2nd ed. Los Angeles, CA: SAGE, 2012; Randolph, Justus J. “A Guide to Writing the Dissertation Literature Review." Practical Assessment, Research, and Evaluation. vol. 14, June 2009; Sutton, Anthea. Systematic Approaches to a Successful Literature Review . Los Angeles, CA: Sage Publications, 2016; Taylor, Dena. The Literature Review: A Few Tips On Conducting It. University College Writing Centre. University of Toronto; Writing a Literature Review. Academic Skills Centre. University of Canberra.

Writing Tip

Break Out of Your Disciplinary Box!

Thinking interdisciplinarily about a research problem can be a rewarding exercise in applying new ideas, theories, or concepts to an old problem. For example, what might cultural anthropologists say about the continuing conflict in the Middle East? In what ways might geographers view the need for better distribution of social service agencies in large cities differently from how social workers might study the issue? You don’t want studies conducted in other fields to substitute for a thorough review of the core research literature in your discipline. However, particularly in the social sciences, thinking about research problems from multiple angles is a key strategy for finding new solutions to a problem or gaining a new perspective. Consult with a librarian about identifying research databases in other disciplines; almost every field of study has at least one comprehensive database devoted to indexing its research literature.

Frodeman, Robert. The Oxford Handbook of Interdisciplinarity . New York: Oxford University Press, 2010.

Another Writing Tip

Don't Just Review for Content!

While conducting a review of the literature, make the most of the time you devote to writing this part of your paper by thinking broadly about what you should be looking for and evaluating. Review not just what scholars are saying, but how they are saying it. Some questions to ask:

- How are they organizing their ideas?

- What methods have they used to study the problem?

- What theories have been used to explain, predict, or understand their research problem?

- What sources have they cited to support their conclusions?

- How have they used non-textual elements [e.g., charts, graphs, figures, etc.] to illustrate key points?

When you begin to write your literature review section, you'll be glad you dug deeper into how the research was designed and constructed because it establishes a means for developing more substantial analysis and interpretation of the research problem.

Hart, Chris. Doing a Literature Review: Releasing the Social Science Research Imagination . Thousand Oaks, CA: Sage Publications, 1998.

Yet Another Writing Tip

When Do I Know I Can Stop Looking and Move On?

Here are several strategies you can utilize to assess whether you've thoroughly reviewed the literature:

- Look for repeating patterns in the research findings . If the same thing is being said, just by different people, then this likely demonstrates that the research problem has hit a conceptual dead end. At this point consider: Does your study extend current research? Does it forge a new path? Or, does it merely add more of the same thing being said?

- Look at sources the authors cite in their work . If you begin to see the same researchers cited again and again, then this is often an indication that no new ideas have been generated to address the research problem.

- Search Google Scholar to identify who has subsequently cited leading scholars already identified in your literature review [see next sub-tab]. This is called citation tracking and there are a number of sources that can help you identify who has cited whom, particularly scholars from outside of your discipline. Here again, if the same authors are being cited again and again, this may indicate no new literature has been written on the topic.

Onwuegbuzie, Anthony J. and Rebecca Frels. Seven Steps to a Comprehensive Literature Review: A Multimodal and Cultural Approach. Los Angeles, CA: Sage, 2016; Sutton, Anthea. Systematic Approaches to a Successful Literature Review. Los Angeles, CA: Sage Publications, 2016.
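The "same researchers cited again and again" signal described above can be roughly approximated with a small script. The following is only a sketch under stated assumptions: the function names, the `threshold` and `window` values, and the sample reading log are all hypothetical, and a low share of new names is a prompt to stop and reflect, not proof that the literature is exhausted.

```python
def new_author_rate(sources):
    """For each source, in reading order, return the share of its cited
    authors that did not appear in any earlier source."""
    seen = set()
    rates = []
    for cited in sources:
        cited = set(cited)
        if not cited:
            rates.append(0.0)
            continue
        new = cited - seen
        rates.append(len(new) / len(cited))
        seen |= cited
    return rates


def looks_saturated(sources, threshold=0.2, window=3):
    """Return True when each of the last `window` sources added at most
    `threshold` new cited authors (both values are assumptions)."""
    rates = new_author_rate(sources)
    if len(rates) < window:
        return False
    return all(rate <= threshold for rate in rates[-window:])


# Hypothetical reading log: one list of cited authors per source read.
reading_log = [
    ["Smith", "Jones", "Lee"],
    ["Smith", "Lee", "Patel"],
    ["Jones", "Lee", "Smith"],
    ["Smith", "Patel"],
    ["Lee", "Jones", "Patel"],
]

print(new_author_rate(reading_log))
print(looks_saturated(reading_log))
```

In this made-up log, the last three sources cite no one new, so the check flags possible saturation. In practice you would still want to confirm this with citation tracking outside your own discipline.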

- Last Updated: Apr 18, 2024 12:20 PM

- URL: https://libguides.usc.edu/writingguide

- University of Texas Libraries

Literature Reviews

What is a Literature Review?

A literature or narrative review is a comprehensive review and analysis of the published literature on a specific topic or research question. The literature reviewed can include books, scholarly articles, conference proceedings, association papers, and dissertations. The review covers the most pertinent studies and points to important past and current research and practices. It provides background and context, and shows how your research will contribute to the field.

A literature review should:

- Provide a comprehensive and updated review of the literature;

- Explain why this review has taken place;

- Articulate a position or hypothesis;

- Acknowledge and account for conflicting and corroborating points of view

From Sage Research Methods

Purpose of a Literature Review

A literature review can be written as an introduction to a study to:

- Demonstrate how a study fills a gap in research

- Compare a study with other research that's been done

Or it can be a separate work (a research article on its own) which:

- Organizes or describes a topic

- Describes variables within a particular issue/problem

Limitations of a Literature Review

Some of the limitations of a literature review are:

- It's a snapshot in time. Unlike other reviews, this one has a beginning, a middle and an end. There may be future developments that could make your work less relevant.

- It may be too focused. Some niche studies may miss the bigger picture.

- It can be difficult to be comprehensive. There is no way to make sure all the literature on a topic was considered.

- It is easy to be biased if you stick to top tier journals. There may be other places where people are publishing exemplary research. Look to open access publications and conferences to reflect a more inclusive collection. Also, make sure to include opposing views (and not just supporting evidence).

Source: Grant, Maria J., and Andrew Booth. “A Typology of Reviews: An Analysis of 14 Review Types and Associated Methodologies.” Health Information & Libraries Journal, vol. 26, no. 2, June 2009, pp. 91–108. Wiley Online Library, doi:10.1111/j.1471-1842.2009.00848.x.

Meryl Brodsky: Communication and Information Studies

Hannah Chapman Tripp: Biology, Neuroscience

Carolyn Cunningham: Human Development & Family Sciences, Psychology, Sociology

Larayne Dallas: Engineering

Janelle Hedstrom: Special Education, Curriculum & Instruction, Ed Leadership & Policy

Susan Macicak: Linguistics

Imelda Vetter: Dell Medical School

For help in other subject areas, please see the guide to library specialists by subject.

Periodically, UT Libraries runs a workshop covering the basics and library support for literature reviews. While we try to offer these once per academic year, we find providing the recording to be helpful to community members who have missed the session. Following is the most recent recording of the workshop, Conducting a Literature Review. To view the recording, a UT login is required.

- October 26, 2022 recording

- Last Updated: Oct 26, 2022 2:49 PM

- URL: https://guides.lib.utexas.edu/literaturereviews

- Open access

- Published: 14 August 2018

Defining the process to literature searching in systematic reviews: a literature review of guidance and supporting studies

- Chris Cooper (ORCID: orcid.org/0000-0003-0864-5607)

- Andrew Booth

- Jo Varley-Campbell

- Nicky Britten

- Ruth Garside

BMC Medical Research Methodology, volume 18, Article number: 85 (2018)

Systematic literature searching is recognised as a critical component of the systematic review process. It involves a systematic search for studies and aims for a transparent report of study identification, leaving readers clear about what was done to identify studies, and how the findings of the review are situated in the relevant evidence.

Information specialists and review teams appear to work from a shared and tacit model of the literature search process. How this tacit model has developed and evolved is unclear, and it has not been explicitly examined before.

The purpose of this review is to determine if a shared model of the literature searching process can be detected across systematic review guidance documents and, if so, how this process is reported in the guidance and supported by published studies.

A literature review.

Two types of literature were reviewed: guidance and published studies. Nine guidance documents were identified, including the Cochrane and Campbell Handbooks. Published studies were identified through ‘pearl growing’, citation chasing, a search of PubMed using the systematic review methods filter, and the authors’ topic knowledge.

The relevant sections within each guidance document were then read and re-read, with the aim of determining key methodological stages. Methodological stages were identified and defined. This data was reviewed to identify agreements and areas of unique guidance between guidance documents. Consensus across multiple guidance documents was used to inform selection of ‘key stages’ in the process of literature searching.

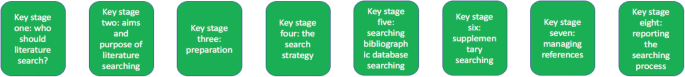

Eight key stages were determined relating specifically to literature searching in systematic reviews. They were: who should literature search, aims and purpose of literature searching, preparation, the search strategy, searching databases, supplementary searching, managing references and reporting the search process.

Conclusions

Eight key stages to the process of literature searching in systematic reviews were identified. These key stages are consistently reported in the nine guidance documents, suggesting consensus on the key stages of literature searching, and therefore the process of literature searching as a whole, in systematic reviews. Further research to determine the suitability of using the same process of literature searching for all types of systematic review is indicated.

Systematic literature searching is recognised as a critical component of the systematic review process. It involves a systematic search for studies and aims for a transparent report of study identification, leaving review stakeholders clear about what was done to identify studies, and how the findings of the review are situated in the relevant evidence.

Information specialists and review teams appear to work from a shared and tacit model of the literature search process. How this tacit model has developed and evolved is unclear, and it has not been explicitly examined before. This is in contrast to the information science literature, which has developed information processing models as an explicit basis for dialogue and empirical testing. Without an explicit model, research in the process of systematic literature searching will remain immature and potentially uneven, and the development of shared information models will be assumed but never articulated.

One way of developing such a conceptual model is by formally examining the implicit “programme theory” as embodied in key methodological texts. The aim of this review is therefore to determine if a shared model of the literature searching process in systematic reviews can be detected across guidance documents and, if so, how this process is reported and supported.

Identifying guidance

Key texts (henceforth referred to as “guidance”) were identified based upon their accessibility to, and prominence within, United Kingdom systematic reviewing practice. The United Kingdom occupies a prominent position in the science of health information retrieval, as quantified by such objective measures as the authorship of papers, the number of Cochrane groups based in the UK, membership and leadership of groups such as the Cochrane Information Retrieval Methods Group, the HTA-I Information Specialists’ Group and historic association with such centres as the UK Cochrane Centre, the NHS Centre for Reviews and Dissemination, the Centre for Evidence Based Medicine and the National Institute for Clinical Excellence (NICE). Coupled with the linguistic dominance of English within medical and health science and the science of systematic reviews more generally, this offers a justification for a purposive sample that favours UK, European and Australian guidance documents.

Nine guidance documents were identified. These documents provide guidance for different types of reviews, namely: reviews of interventions, reviews of health technologies, reviews of qualitative research studies, reviews of social science topics, and reviews to inform guidance.

Whilst these guidance documents occasionally offer additional guidance on other types of systematic reviews, we have focused on the core and stated aims of these documents as they relate to literature searching. Table 1 sets out: the guidance document, the version audited, their core stated focus, and a bibliographical pointer to the main guidance relating to literature searching.

Once a list of key guidance documents was determined, it was checked by six senior information professionals based in the UK for relevance to current literature searching in systematic reviews.

Identifying supporting studies

In addition to identifying guidance, the authors sought to populate an evidence base of supporting studies (henceforth referred to as “studies”) that contribute to existing search practice. Studies were first identified by the authors from their knowledge of this topic area and, subsequently, through systematic citation chasing of key studies (‘pearls’ [1]) located within each key stage of the search process. These studies are identified in Additional file 1: Appendix Table 1. Citation chasing was conducted by analysing the bibliography of references for each study (backwards citation chasing) and through Google Scholar (forward citation chasing). A search of PubMed using the systematic review methods filter was undertaken in August 2017 (see Additional file 1). The search terms used were: (literature search*[Title/Abstract]) AND sysrev_methods[sb]. This search returned 586 results, which CC sifted for relevance to the key stages in Fig. 1.
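The two directions of citation chasing described here can be illustrated on a toy citation graph. This is a minimal sketch, not the authors' procedure: they worked from bibliographies and Google Scholar by hand, and the `references` mapping, paper labels, and function names below are invented for illustration.

```python
def backward_chase(pearl, references):
    """Backward citation chasing: the works listed in the pearl's bibliography."""
    return set(references.get(pearl, []))


def forward_chase(pearl, references):
    """Forward citation chasing: the works whose bibliography lists the pearl."""
    return {paper for paper, refs in references.items() if pearl in refs}


# Hypothetical graph: each key is a paper, each value is its reference list.
references = {
    "pearl": ["A", "B"],
    "C": ["pearl", "A"],
    "D": ["pearl"],
    "E": ["B"],
}

# Candidate studies reachable in one step from the pearl, in either direction.
candidates = (
    backward_chase("pearl", references) | forward_chase("pearl", references)
) - {"pearl"}

print(sorted(candidates))
```

Paper "E" is not picked up because it neither cites the pearl nor is cited by it. Reaching it would take a second round of chasing starting from "B", which is how a pearl-growing search expands.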

Fig. 1 The key stages of literature search guidance as identified from nine key texts

Extracting the data

To reveal the implicit process of literature searching within each guidance document, the relevant sections (chapters) on literature searching were read and re-read, with the aim of determining key methodological stages. We defined a key methodological stage as a distinct step in the overall process for which specific guidance is reported, and action is taken, that collectively would result in a completed literature search.

The chapter or section sub-heading for each methodological stage was extracted into a table using the exact language as reported in each guidance document. The lead author (CC) then read and re-read these data, and the paragraphs of the document to which the headings referred, summarising section details. This table was then reviewed, using comparison and contrast to identify agreements and areas of unique guidance. Consensus across multiple guidelines was used to inform selection of ‘key stages’ in the process of literature searching.
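The idea of using consensus across documents to select key stages can be sketched as a simple tally. The review describes a qualitative process of reading and comparison, so the code below is only an analogy: the document names, the stage assignments, and the majority threshold `min_share` are assumptions made for illustration and are not taken from the review's data.

```python
from collections import Counter


def consensus_stages(stages_by_document, min_share=0.5):
    """Return the stages reported by more than `min_share` of the documents."""
    n_documents = len(stages_by_document)
    counts = Counter(
        stage
        for stages in stages_by_document.values()
        for stage in set(stages)  # count each stage once per document
    )
    return sorted(
        stage for stage, count in counts.items() if count / n_documents > min_share
    )


# Hypothetical extraction table: stage headings found in each guidance document.
stages_by_document = {
    "Guidance A": ["searching databases", "supplementary searching", "managing references"],
    "Guidance B": ["searching databases", "supplementary searching"],
    "Guidance C": ["searching databases", "reporting the search process"],
}

print(consensus_stages(stages_by_document))
```

Stages that appear in only one document fall below the threshold here. In the review itself, such items were treated as areas of unique guidance and were not discarded.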

Having determined the key stages of literature searching, we then read and re-read the sections relating to literature searching again, extracting specific detail relating to the methodological process of literature searching within each key stage. Again, the guidance was read and re-read, first on a document-by-document basis and, secondly, across all the documents above, to identify both commonalities and areas of unique guidance.

Results and discussion

Our findings.